2022: My publications in review

I’m a little late to post it, but in keeping with my annual tradition, here’s a rundown of all the papers I published in 2022. There were a lot of them, especially related to two of my main projects. The first is the VLRC Project, our older corpus of comics, which several papers analyzed this year. The second is my project developing a multimodal model of the language capacity with Joost Schilperoord. (… and books on each are coming soon.) So, here are my papers from 2022:

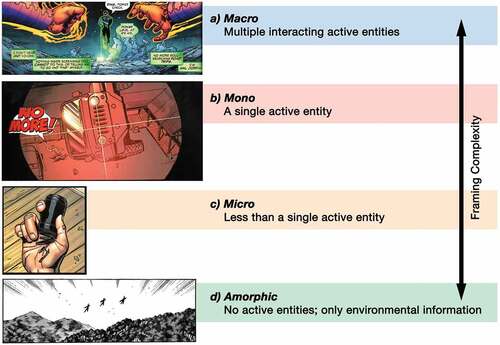

The framing of subjectivity (with Irmak Hacımusaoğlu and Bien Klomberg) – This paper looked at a corpus of subjective viewpoints in European, Asian, and American comics and showed that they align with more “focal” framing.

Linguistic Typology of Motion Events in Visual Narratives (with Irmak Hacımusaoğlu) – This paper analyzed various comics from around the world and showed a relationship between how languages encode motion events and how they are depicted in comics from speakers of those languages.

Picture perfect Peaks (with Bien Klomberg) – In several experiments, this paper examined reading times for different panels that motivate readers to generate inferences, and found that inference generation varies along several dimensions, especially explicitness.

Running through the Who, Where, and When (with Irmak Hacımusaoğlu and Bien Klomberg) – This paper analyzed how different dimensions of meaning (time, space, characters) continuously extend across sequences of panels, and how they differ across comics from Europe, Asia, and America.

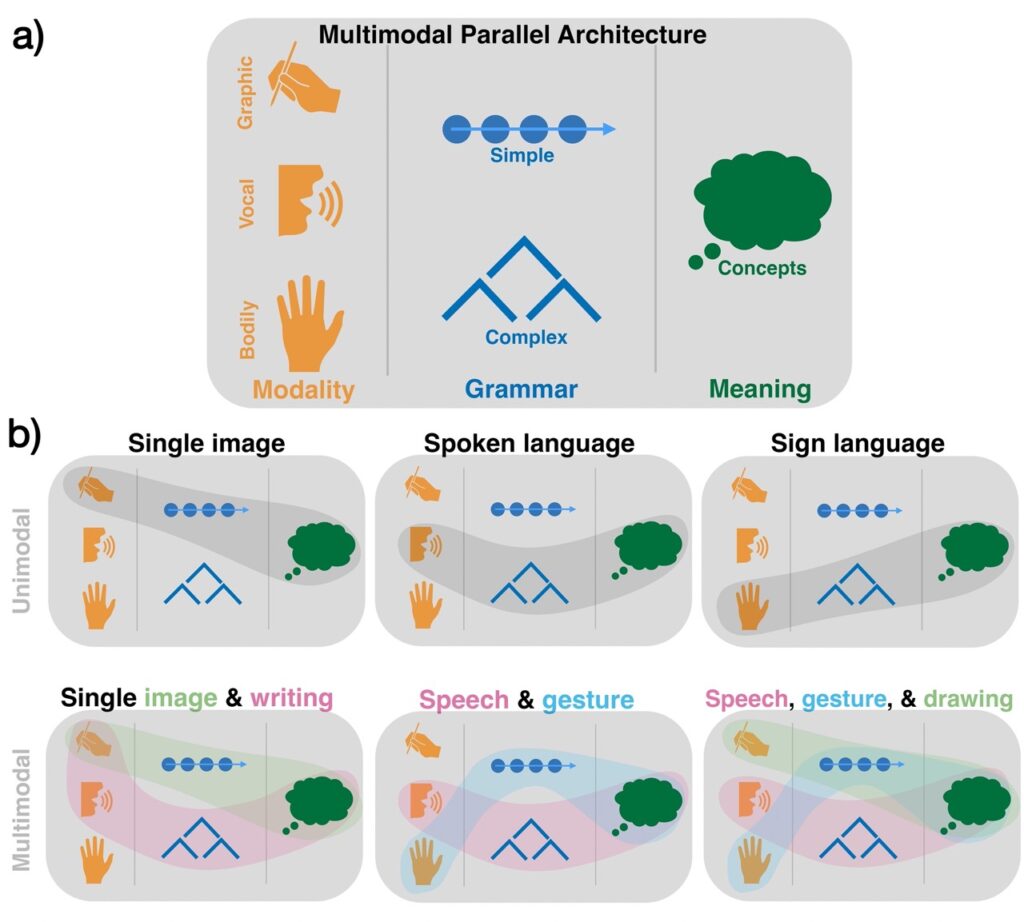

Reimagining language (with Joost Schilperoord) – In this short piece for Cognitive Science, we call for a new paradigm in the cognitive and language sciences recognizing that language is not “amodal”, and that natural human communication is multimodal.

Remarks on multimodality (with Joost Schilperoord) – This paper presents our revised multimodal model of language and communication, and delves into our classifications for how grammars interact with each other across the dimensions of symmetry and allocation.

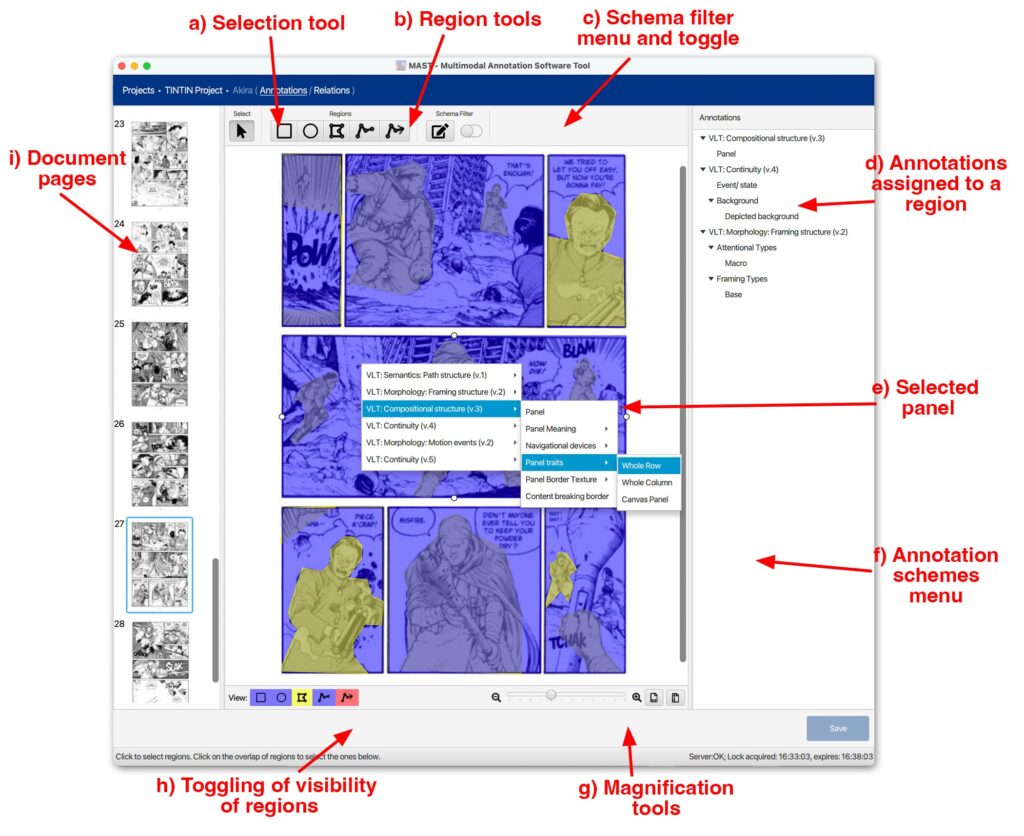

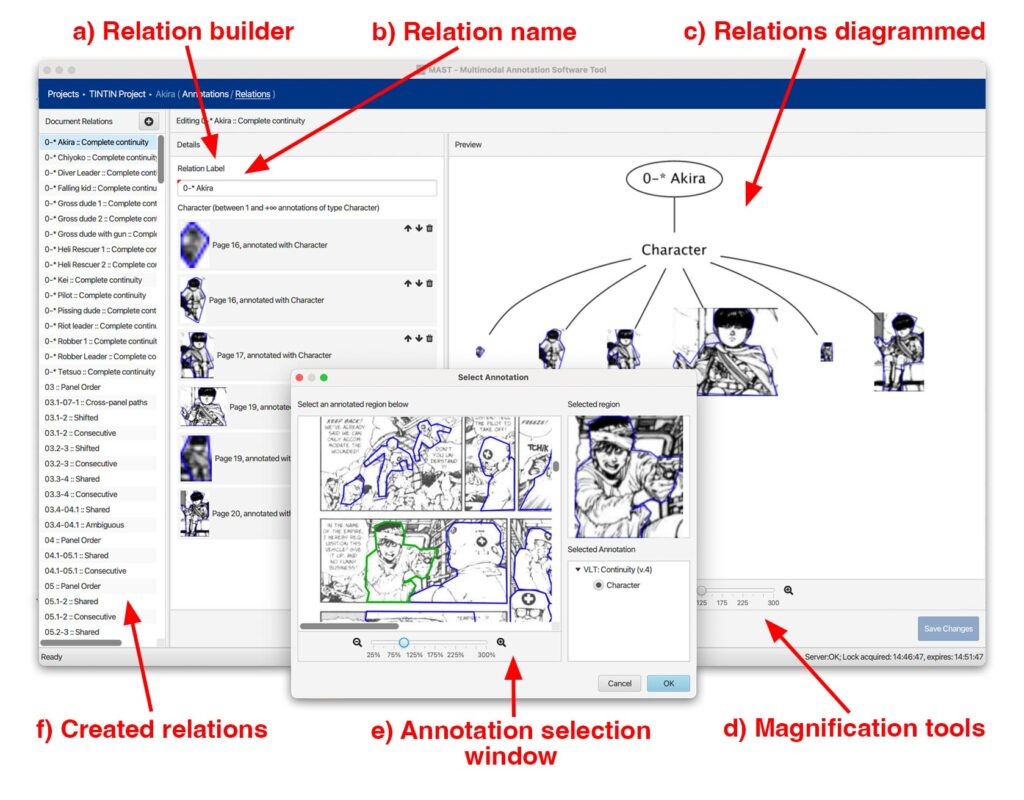

The Multimodal Annotation Software Tool (MAST) (with Bruno Cardoso) – This paper describes our annotation software tool, MAST, which was designed for data collection for the TINTIN Project.

Before: Unimodal linguistics, After: Multimodal linguistics (with Joost Schilperoord) – We here analyze two picture patterns which convey “before-after” states in memes, cartoons, and advertising. We show that this is a general construction with systematic tendencies and variation.

An electrophysiological investigation of co-referential processes in visual narrative comprehension (with Cas Coopmans) – This analysis of brainwave data showed that co-referential connections across panels (connecting zooms to the same characters in other panels) seem to engage similar neural processing as co-reference in sentence processing.

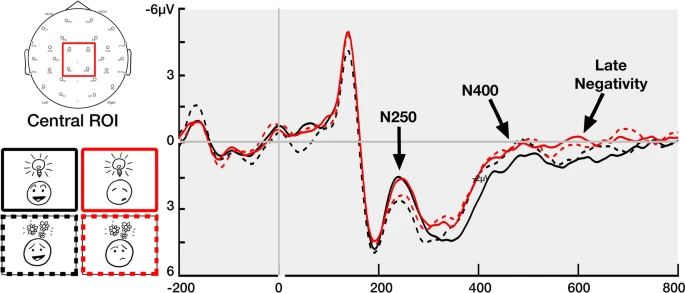

Meaning above (and in) the head (with Tom Foulsham) – We here present experiments measuring reaction times and brain waves for “upfixes” like hearts or gears that float above characters’ heads, and we show they are systematic and constrained abstract visual lexical items.
